What are Microarchitecture Attacks?

Microarchitecture attacks are a class of hardware-based attacks that exploit how modern CPUs internally execute instructions rather than bugs in software logic. They take advantage of performance features—such as caching, speculative execution, branch prediction, and out-of-order execution—to leak sensitive information (like cryptographic keys or passwords) through side channels.

The Distinction: Software vs. Hardware

- Traditional Software Attacks (e.g., Buffer Overflows): Exploit coding errors or logic flaws in the software itself.

- Microarchitecture Attacks: Exploit the physical implementation of the Instruction Set Architecture (ISA). They abuse the way a CPU optimizes execution to leak data across privilege boundaries.

Why are they dangerous?

- Bypass Security Layers: They can bypass OS and application-level isolation.

- Stealthy: They often work without crashing programs or leaving traditional logs.

- Hard to Patch: Mitigations often require firmware updates that can significantly reduce performance.

The Core Concept: Performance vs. Security

To understand these attacks, we must look at how modern processors are designed. The primary goal of a CPU is speed. To achieve this, it uses several optimizations:

- Guess future instructions (Speculation)

- Execute instructions out of order

- Share caches across processes

- Avoid waiting for memory

The Trade-off: These optimizations create side effects. Microarchitecture attacks abuse these side effects to infer data.

How do they work?

These attacks do not directly “read” protected memory. Instead, they infer secrets by observing two main factors:

- Timing Differences: fast vs. slow access.

- Hardware State Changes: Cache hits vs. misses.

A Simple Example: The Cache Side Channel

Imagine we force a specific memory address ($A$) to be removed from the CPU cache (making it slow to access).

- Attacker flushes $A$ from cache.

- Victim runs a program.

- Attacker tries to read $A$ again.

- If access is FAST: The victim accessed $A$, causing the CPU to load it back into the cache.

- If access is SLOW: The victim did not access $A$.

Here, the attacker never read the victim’s data directly but learned about the victim’s behavior by measuring time.

Anatomy of an Attack

Most microarchitecture attacks follow a three-phase structure:

- Preparation (Setup Phase)

- The attacker puts the CPU into a known state (e.g., flushing the cache).

- Trigger (Victim Execution Phase)

- The victim performs a computation that depends on a secret. This secret influences the hardware state (e.g., loading a specific line into cache).

- Observation & Extraction (Measurement Phase)

- The attacker measures side effects (time) to reconstruct the secret. By repeating this, secrets can be leaked bit-by-bit.

Side Channel vs. Microarchitecture Attacks

It is important to distinguish between broad side-channel attacks and specific microarchitectural ones.

Side Channel Attacks (Broad Category)

Learning secrets from indirect signals, not by breaking logic.

- Signals: Time, Power consumption, Electromagnetic radiation, Sound.

Microarchitecture Attacks (Specific Category)

Side channel attacks that specifically exploit CPU internal design features.

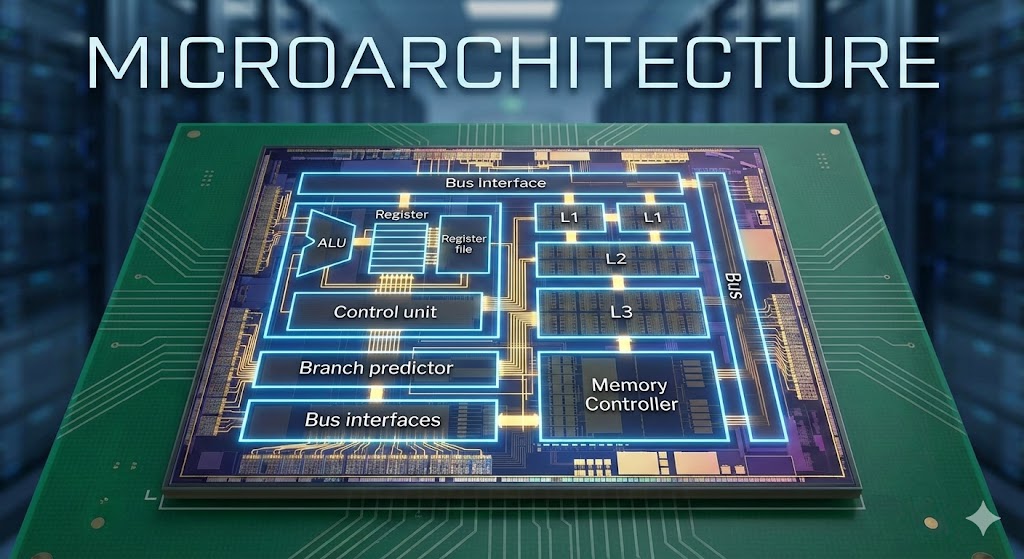

- Components: Cache, Branch Predictors, Speculative Execution, Pipelines.

Rule of Thumb: All microarchitecture attacks are side-channel attacks, but not all side-channel attacks are microarchitectural.

Comparison:

- Power Analysis (Side Channel): Measuring power usage during crypto operations. This works even if the CPU has no cache.

- Cache Timing (Microarchitecture): Measuring time differences caused by cache hits/misses. This relies on the Cache, an internal CPU optimization component.

The 5 Pillars of CPU Optimization

These five features are the root cause of the side effects used in attacks.

1. Cache

A small, ultra-fast memory inside the CPU.

- Function: Keeps a copy of recently used data so the CPU doesn’t have to wait for slow RAM.

- Side Effect: Accessing cached data is measurably faster than non-cached data.

2. Branch Prediction

A “guesser” inside the CPU.

- Function: When the code reaches a conditional branch (e.g.,

if (x < 10)), the CPU doesn’t want to wait for the condition to be calculated. The predictor guesses the path and starts working immediately. - Outcome:

- Correct Guess: Time saved ($Fast$).

- Wrong Guess: Work thrown away ($Slower$).

3. Speculative Execution

“Acting before you are 100% sure.”

- Function: Working ahead based on the Branch Predictor’s guess.

- The Flaw: Even if the guess is wrong and the CPU “undoes” the logical work, physical traces (like data left in the cache) remain. This is the heart of Spectre.

4. Pipeline

- Function: Breaks instructions into stages so the CPU can work on multiple instructions simultaneously (like a factory assembly line).

- Side Effect: Contention for pipeline stages can reveal information about what instructions are running.

5. Execution Units

- Function: Specialized workers (ALUs, FPUs) that do the actual math.

- Side Effect: If two processes compete for the same execution unit, they can slow each other down, revealing activity patterns.

The Leakage Chain

Why does this matter? Because specific internal behaviors translate directly into information leakage.

- Private Value (The Secret) $\downarrow$

- Program Choice (Branch/Index) $\downarrow$

- CPU Internal Feature Reacts (Cache/Predictor) $\downarrow$

- Fast or Slow Behavior (Side Effect) $\downarrow$

- Time Difference $\downarrow$

- Observer Learns the Secret

All major CPU performance features create fast/slow behavior, and time observation turns that behavior into information leakage.

A Preview of Spectre

Spectre is the most famous example of a microarchitectural attack. It abuses Speculative Execution:

- The CPU sees a choice.

- It guesses the path and starts executing code that accesses secret memory.

- Later, it realizes the guess was wrong and “rolls back” the changes.

- However, the secret data loaded into the cache during the “wrong guess” remains.

- The attacker measures access times to retrieve the secret.

In the next part of this series, we will dive deeper into Cache Attacks and how Flush+Reload works in practice.

Discussion